Intel is shifting its focus from its server CPUs to a growing list of adjacent chips that are driving a fundamental shift in computing toward AI, in which answers are derived from associations and patterns found in data.

Accelerators such as GPUs and AI chips took the spotlight at Intel's recent Vision event near Dallas. Intel is also making its chip designs modular so that AI accelerators can be packaged alongside its Xeon CPUs.

CEO Pat Gelsinger listed AI as one of the pillars of the company's future product lineup. He invoked former CEO Andy Grove in predicting that AI, which demands higher levels of computing performance, will be a major inflection point in Intel's strategic decisions.

Computing has evolved since the introduction of the 4004, Intel's first CPU, which went on sale in 1971, and is now available at one's fingertips through the cloud and the edge, with machine learning and AI delivering smarter insights, Gelsinger said.

"We see this explosion of use cases. If you are not applying AI to every one of your business processes, you are falling behind," Gelsinger said. "We need to make sure that humans take advantage of AI, but humans also need to make sure that AI is better and ethical as well."

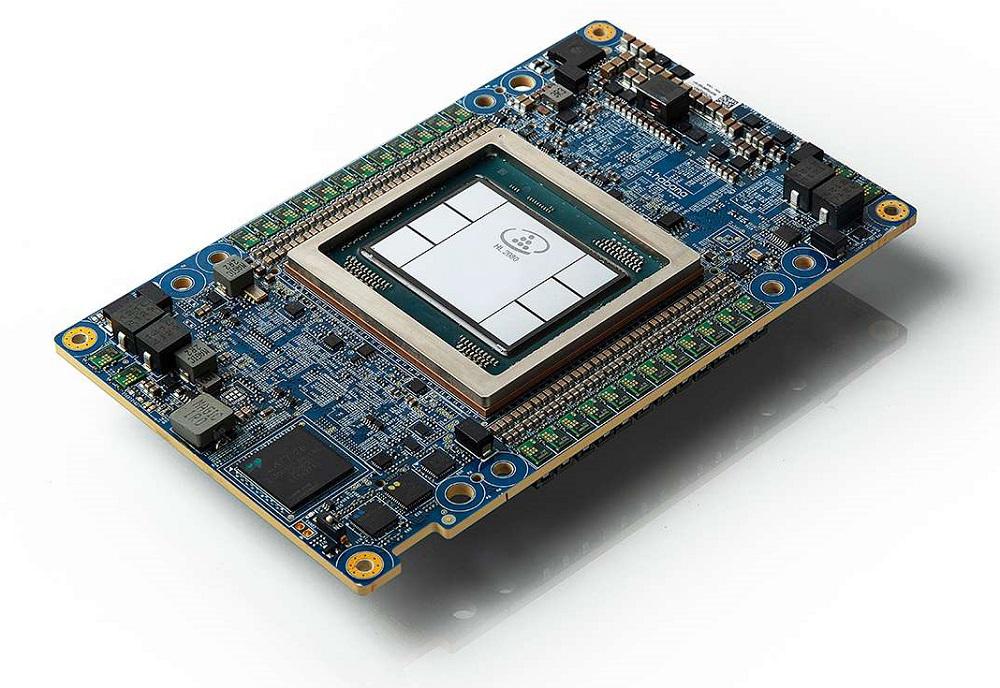

Intel's Habana Labs Gaudi2 mezzanine card

The company relies heavily on input from Google Cloud, Amazon Web Services and Microsoft's Azure to drive its AI hardware and software strategy. The chipmaker announced the Gaudi-2 AI chip and new infrastructure processing units (IPUs) designed with cloud providers such as Google and Microsoft. The Gaudi-2 chip is built on standards such as Ethernet, which makes it easier to deploy in infrastructure.

Intel added the Gaudi line of AI chips through its acquisition of Habana Labs in 2019. First-generation Gaudi chips are now available through instances on Amazon Web Services, and that relationship gave Intel pointers on how to design Gaudi-2 to support hyperscaler workloads, security requirements and scalability.

"We learned a lot from the engagement with Amazon," said Eitan Medina, chief operating officer of Habana Labs, during a press briefing at Vision.

Intel also made clear that it could not rely on chip sales alone and needed a software strategy to layer on top of its hardware offerings.

"Intel has been trying to unify software across multiple hardware platforms. It is still evolving," said Kevin Krewell, an analyst at Tirias Research.

The chipmaker announced Project Amber, a new service that creates a secure bubble in which customers can safely run AI models without worrying about data leaking to unauthorized parties. The technology authenticates every connection point and will be offered as a verification service in single-cloud or multicloud environments to protect data.

Project Amber requires Intel's hardware and software services to work together, and the technology will let companies run machine learning models in a secure, trusted cloud environment, Greg Lavender, Intel's chief technology officer, said in a keynote.

"The cost of developing AI models can range from $10,000 to $10 million. Protecting that intellectual property is a high priority for those users and applications," Lavender said.

Lavender went on to describe how OpenVINO, an AI inference toolkit, is being used with SGX (Software Guard Extensions) and other technologies to secure AI at the edge. SGX provides an additional layer of protection so that unauthorized third parties cannot access the data.

Intel also gave examples of how its AI software and hardware help companies meet regulatory requirements. Intel announced a partnership with BeeKeeperAI that lets healthcare providers run machine learning at the edge, which can often sit outside trusted environments. The joint offering, which runs on Microsoft's Azure cloud, helps healthcare providers comply with data privacy regulations.

Intel's SGX technology allowed Bosch USA, which develops technologies for autonomous cars, to deploy training models in a private environment. The AI models use real-world data and machine-generated synthetic data while obscuring information such as facial data. The company has also deployed AI models for safety-critical systems in autonomous driving, which likewise carry regulatory requirements, said Tim Frasier, president of cross-domain computing solutions at Bosch, who spoke on stage at the conference.

Intel Arctic Sound-M GPU

Intel also announced the Arctic Sound-M graphics processor, designed for deployment in datacenters for AI, video streaming and cloud gaming.

The GPU can perform 150 trillion operations per second for video processing and AI. "So as you are doing that streaming, you can use AI to understand what is in the video," said Raja Koduri, executive vice president and general manager of Intel's Accelerated Computing Systems and Graphics Group, at the conference.

Video consumes a large share of internet traffic, but it is also being used for applications such as analyzing data captured by cameras.

"We are also running more AI analytics on video streams," Koduri said, adding, "These new use cases demand new hardware acceleration because they are real-time with AI."

The GPU will be available in two configurations: a 150-watt model with 32 Xe cores and a 75-watt model with 16 Xe cores. The GPUs include Xe Matrix Extensions (XMX) for AI acceleration.

Arctic Sound-M supports a software development platform called OneAPI, which in turn supports a wide range of AI programming frameworks, including TensorFlow and Caffe.

OneAPI is a key ingredient in Intel's AI success, said Krewell of Tirias Research, adding that "Nvidia's CUDA is still the gold standard for vendor software stacks."

The new AI chips are essential to the future of Intel, which is trying to catch up with Nvidia, the leader in AI processing. To accommodate new accelerators, Intel is taking a modular approach to chip design in which it can package a variety of internally developed GPUs, ASICs or FPGAs alongside its Xeon chips.

"The first thing that is needed is a modular approach, because different AI solutions are needed," said Bob Brennan, vice president and general manager for Intel's foundry services, in a breakout session at Vision.

Brennan is leading an effort to diversify Intel's chips by bringing in support for AI accelerators based on the RISC-V or Arm architectures. The company already offers FPGAs for AI applications and is working on neuromorphic chips inspired by how the human brain works.

Intel already has a modular chip, code-named Ponte Vecchio, an accelerator that integrates graphics cores, vector processors, I/O, networking, matrix engines and other processing cores in a single package. The company will share more details about the chip at the upcoming ISC High Performance conference, which starts later this month.

"Modularity starts with your architecture. When you look at your computer architecture and how you are going to build your SoC, you need to think about the potential partitioning," Brennan said.

Intel's AI hardware strategy is also tied to standard interfaces.

"If you look at the Gaudi architecture, we made a commitment to use Ethernet because that is the most widely used interface, and it lets customers scale using a standard interface rather than a proprietary one," said Medina of Habana Labs.

Intel also supports the UCIe (Universal Chiplet Interconnect Express) interface inside the chip package to connect partitioned AI accelerators, CPUs and other coprocessors.

Last year, Intel created a new business unit called the Accelerated Computing Systems and Graphics Group, led by Koduri, to focus on GPUs, accelerators and AI chips. Intel's Xeon chips still dominate datacenter infrastructure (with an 85 percent share, by Intel's estimate), providing a huge installed base into which the company hopes to sell its AI chips.

But the company has had its share of struggles with AI products. It bought AI chip startup Nervana in 2016 but discontinued the product line in early 2020, about a year before Gelsinger became CEO. Gelsinger has since reset Intel's operations with a renewed focus on manufacturing, engineering, and research and development.

Gelsinger acknowledged that Intel is still in the process of sorting out its multiple AI offerings, use cases and customer requirements.

"We also have Arctic Sound. There will be cases where it is competing with Gaudi. We have to sort those out as we face the customer, because they have very solid swim lanes," Gelsinger said during a press conference.


Categories: AI/ML/DL

Tags: Arctic Sound, Gaudi, Gaudi-2, GPUs, Habana Labs, Intel

About the author: Tiffany Trader. With over a decade of experience covering the HPC space, Tiffany Trader is one of the preeminent voices reporting on advanced scale computing today.